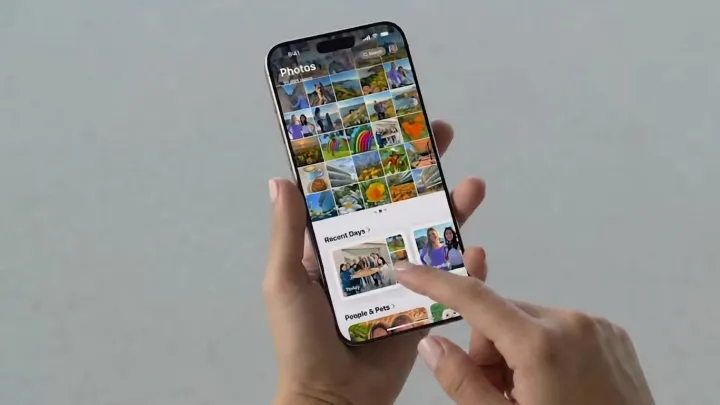

Software, not sensors, now defines the iPhone camera. With iOS 27, Apple is reportedly preparing AI photo tools that treat editing less like manual labor and more like a single tap, folding generative models directly into the Photos app rather than pushing users to third-party services.

The headline move is background extension. Instead of cropping tighter, users will be able to pull the frame wider, as diffusion-based models infer the missing pixels at the edges and synthesize plausible detail around subjects. Image quality upgrades are expected to lean on super-resolution and denoising networks, sharpening low-light shots and compressed images without forcing users to understand convolutional layers or training data pipelines. Reframing tools will automatically adjust composition, repositioning subjects with the help of semantic segmentation and depth maps so portraits and group shots follow classic rules of focus and balance.
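To make the reframing idea concrete, here is a minimal sketch in Python of how a segmentation mask could drive an automatic crop. It is not Apple's method, which has not been disclosed. The rule of thirds stands in for "classic rules" as an assumption, and the function names `subject_centroid` and `thirds_crop`, along with the list-of-lists mask format, are hypothetical choices made for illustration.

```python
from typing import List, Tuple

# Hypothetical input format: 1 marks a subject pixel, 0 marks background.
Mask = List[List[int]]


def subject_centroid(mask: Mask) -> Tuple[float, float]:
    """Return the (x, y) centroid of all subject pixels in the mask."""
    total = sum_x = sum_y = 0
    for y, row in enumerate(mask):
        for x, value in enumerate(row):
            if value:
                total += 1
                sum_x += x
                sum_y += y
    if total == 0:
        raise ValueError("mask contains no subject pixels")
    return sum_x / total, sum_y / total


def thirds_crop(mask: Mask, crop_w: int, crop_h: int) -> Tuple[int, int, int, int]:
    """Choose a crop window (left, top, width, height) that puts the subject
    centroid on a rule-of-thirds intersection (an assumed composition rule).

    All four intersections are tried; the one that needs the least clamping
    to stay inside the image wins.
    """
    img_h, img_w = len(mask), len(mask[0])
    if crop_w > img_w or crop_h > img_h:
        raise ValueError("crop is larger than the image")

    cx, cy = subject_centroid(mask)
    best = None
    for fx in (1 / 3, 2 / 3):
        for fy in (1 / 3, 2 / 3):
            # Ideal window origin so the centroid lands on this intersection.
            left = round(cx - fx * crop_w)
            top = round(cy - fy * crop_h)
            # Clamp the window so it stays within the image bounds.
            left_c = min(max(left, 0), img_w - crop_w)
            top_c = min(max(top, 0), img_h - crop_h)
            # Cost is how far clamping pushed the window off the ideal spot.
            cost = abs(left_c - left) + abs(top_c - top)
            if best is None or cost < best[0]:
                best = (cost, left_c, top_c)

    _, left, top = best
    return left, top, crop_w, crop_h


if __name__ == "__main__":
    # A 9x9 frame with a single subject pixel in the center.
    mask = [[0] * 9 for _ in range(9)]
    mask[4][4] = 1
    print(thirds_crop(mask, 6, 6))
```

A production system would presumably weigh far more than a centroid, such as faces, gaze direction, and depth, but the sketch shows the basic loop: locate the subject from a mask, then search for a frame that places it well.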

The more interesting bet is control. Apple is expected to keep these models running on-device, leveraging its Neural Engine rather than cloud servers, which reduces latency and avoids shipping personal photos to remote data centers. That keeps the company’s privacy story intact while quietly resetting user expectations: the default edit button may soon mean asking an algorithm to imagine what the scene should have looked like, not what the camera actually captured.